TL;DR: As AV systems evolve, regression tests can still “pass” while quietly no longer validating the behavior they were created to check. This blog explores how Foretellix is using AI to detect when test intent drifts, helping teams keep scenario-based validation meaningful, scalable, and trustworthy over time.

As autonomous vehicle systems grow more complex, closed-loop test scenarios must adapt without losing their validity. This blog introduces how Foretellix is addressing the hidden problem of “intent drift” in regression testing: the intent of each test is described in natural language, and AI helps interpret that description and evaluate whether each run still fulfills it.

Regression testing is one of the cornerstones of autonomous vehicle (AV) development. Once a regression test scenario is created, whether derived from real-world recordings or built as a synthetic simulation, it typically becomes part of a nightly or weekly test suite. The expectation is simple: if the test passed yesterday, it should still pass tomorrow, confirming both that system updates haven’t broken existing capabilities and that the test still challenges the system according to its intended purpose.

But in practice, things are not that straightforward. As the AV stack evolves, the same scenario can start producing results that look fine on the surface but no longer reflect what the test was meant to validate. This hidden drift in “test intent” can quietly erode the reliability of entire test suites.

When Tests Lose Their Purpose

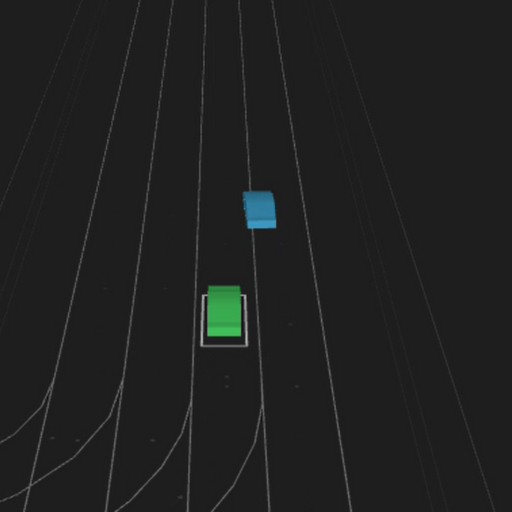

Consider a test scenario derived from real-world driving data and added to the regression test suite. In the original recording, the ego vehicle is traveling at 60 km/h when another car abruptly merges into its lane about 10 meters ahead. This provides a valuable test of the AV’s ability to handle cut-ins.

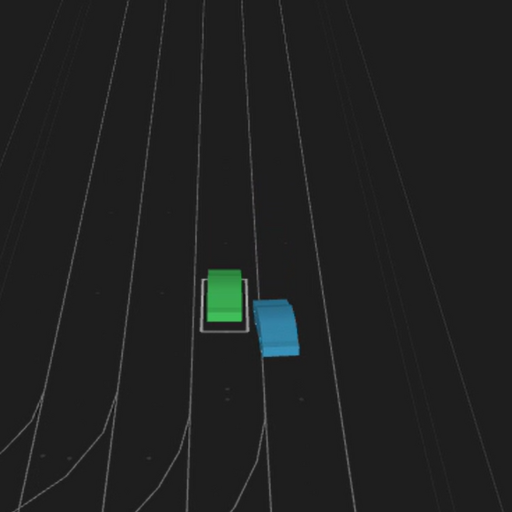

Now suppose a new release of the AV stack drives the same stretch at 80 km/h instead. Because the other car follows its recorded trajectory, at the higher ego speed it now changes lanes behind the ego vehicle rather than in front of it. The test may still run, and the system under test (SUT) may still behave correctly under the new conditions, but the original purpose of the scenario (to verify safe cut-in handling) has been lost.
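A back-of-the-envelope sketch shows why a 20 km/h change is enough to lose the cut-in. It assumes (hypothetically) that the merging car replays its recorded trajectory unchanged, that both ego runs start from the same point at a constant speed, and that the merge happens `merge_time_s` seconds into the scenario. Only the 60 km/h, 80 km/h, and roughly 10 m figures come from the example; the merge time is a free parameter. Each second before the merge moves the faster ego about (80 - 60) / 3.6 ≈ 5.6 m further forward, so a 10 m gap is gone after roughly 1.8 s.

```python
KMH_TO_MS = 1000 / 3600  # converts km/h to m/s

def gap_at_merge(recorded_gap_m, recorded_ego_kmh, new_ego_kmh, merge_time_s):
    """Longitudinal gap (m) from the ego's front to the merging car at merge time.

    Positive means the merge happens ahead of the ego, negative means behind.
    Hypothetical model: the merging car replays its recorded trajectory unchanged
    and both ego runs start from the same point at a constant speed.
    """
    # Extra distance the faster ego covers before the merge happens.
    extra_distance_m = (new_ego_kmh - recorded_ego_kmh) * KMH_TO_MS * merge_time_s
    return recorded_gap_m - extra_distance_m

# Merge time after which a 10 m lead disappears for an 80 km/h ego.
crossover_s = 10.0 / ((80.0 - 60.0) * KMH_TO_MS)
print(f"merge lands behind the ego if it happens after {crossover_s:.1f} s")
for t in (1.0, 2.0, 3.0):
    print(f"merge at {t:.0f} s: gap = {gap_at_merge(10.0, 60.0, 80.0, t):+.1f} m")
```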

Why Current Approaches Fall Short

Today, teams rely on three main approaches to address this problem, none of which scales.

- Manual review: Engineers load runs, inspect actor trajectories, and try to determine whether the intended interaction really happened. This is time-consuming, subjective, and unworkable at scale.

- Rigid rule-based checks: Some organizations attempt to encode conditions (e.g., “cut in if the distance is less than X meters”). This works for very simple behaviors, but as soon as the intent becomes more complex, the rules quickly multiply and become hard to manage. On top of that, rules must be developed separately for each test, making the approach inherently unscalable.

- Relative or event-based design: For concrete scenarios, it is possible to define relative trajectories (e.g., in relation to the ego vehicle) or to trigger events based on conditions rather than fixed timings. This helps preserve intent in many hand-crafted simulations, but it doesn’t carry over to test scenarios derived from real-world driving data, where trajectories are already fixed. Converting recorded behavior into relative or event-based logic is extremely difficult, and even when successful, the resulting scenarios often become highly complex and difficult to manage.
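The difference between fixed timing and an event-based trigger can be illustrated with a toy 1D simulation in Python. All values are hypothetical: the other vehicle starts 30 m ahead in the adjacent lane at 50 km/h, the fixed-time trigger fires at 7.2 s (which yields a gap of about 10 m for a 60 km/h ego), and the relative trigger fires once the vehicle is 10 m or less ahead of the ego. The function returns the gap at the moment the lane change would begin.

```python
KMH_TO_MS = 1000 / 3600

def gap_when_triggered(ego_kmh, other_kmh, other_start_m, trigger, dt=0.05, t_max=20.0):
    """Gap (m, other minus ego) at the first time step where trigger fires, else None.

    Toy 1D model: both vehicles hold a constant speed; the other vehicle starts
    other_start_m ahead in the adjacent lane. trigger(t, gap) decides when its
    lane change begins.
    """
    ego_v, other_v = ego_kmh * KMH_TO_MS, other_kmh * KMH_TO_MS
    for step in range(round(t_max / dt) + 1):
        t = step * dt
        gap = (other_start_m + other_v * t) - ego_v * t
        if trigger(t, gap):
            return gap
    return None

def fixed_time(t, gap):
    # Replays the timing that produced a cut-in for a 60 km/h ego.
    return t >= 7.2

def relative_gap(t, gap):
    # Fires relative to the ego: once the other car is 10 m or less ahead.
    return 0.0 < gap <= 10.0

for ego_kmh in (60.0, 80.0):
    fixed = gap_when_triggered(ego_kmh, 50.0, 30.0, fixed_time)
    relative = gap_when_triggered(ego_kmh, 50.0, 30.0, relative_gap)
    print(f"ego {ego_kmh:.0f} km/h: fixed-time gap {fixed:+.1f} m, "
          f"relative-trigger gap {relative:+.1f} m")
```

With an 80 km/h ego, the fixed-time trigger fires when the other vehicle is about 30 m behind the ego, while the relative trigger still starts the lane change roughly 10 m ahead. A recorded trajectory has no equivalent hook to attach such a trigger to.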

None of these approaches provides the consistency and scalability required for continuous integration and large-scale validation pipelines.

A New Approach: Detecting Test Intent Automatically

Foretellix is developing a solution that reframes the problem. Instead of relying on static checks or human review, intent is captured in natural language and evaluated automatically with AI.

Here’s how it works:

- The engineer or system specifies the purpose of a scenario in plain English. For example, “verify a cut-in maneuver at 80 km/h with a time-gap of 1 second or less.”

- After the run completes, an AI-based detector analyzes the scenario outcome.

- The system reports whether the intent was achieved, provides a confidence score, and explains the reasoning in clear language.

This creates a repeatable, objective process that can be applied across large suites of tests. Engineers no longer need to comb through visualizations; they get a direct answer on whether a scenario is still exercising the behavior it was designed to check.

Just as importantly, the results can be aggregated across thousands of tests, giving teams a statistical view of how well their entire nightly regression suite is maintaining its test intent.
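As an illustration of such aggregation, the Python sketch below rolls per-test verdicts up into suite-level numbers. The `IntentResult` record, its field names, the 0.7 confidence threshold, and the sample results are all hypothetical; the one design choice worth noting is that low-confidence verdicts are routed to human review rather than counted as drift.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class IntentResult:
    """One intent verdict per test run (hypothetical field names)."""
    test_id: str
    achieved: bool
    confidence: float  # 0.0 to 1.0, as reported by the detector
    explanation: str

def summarize(results, min_confidence=0.7):
    """Aggregate per-test verdicts into suite-level statistics.

    Verdicts below min_confidence (an illustrative threshold) are listed for
    human review and excluded from the intent rate.
    """
    confident = [r for r in results if r.confidence >= min_confidence]
    achieved = sum(1 for r in confident if r.achieved)
    return {
        "total": len(results),
        "intent_rate": achieved / len(confident) if confident else None,
        "drifted": [r.test_id for r in confident if not r.achieved],
        "needs_review": [r.test_id for r in results if r.confidence < min_confidence],
    }

# Hypothetical nightly results, for illustration only.
nightly = [
    IntentResult("cut_in_001", True, 0.95, "Cut-in ahead of ego at 0.6 s time gap."),
    IntentResult("cut_in_002", False, 0.91, "Merging car changed lanes behind the ego."),
    IntentResult("cut_in_003", True, 0.88, "Cut-in ahead of ego at 0.9 s time gap."),
    IntentResult("cut_in_004", False, 0.40, "Lane change was ambiguous."),
]
print(summarize(nightly))
```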

An Example in Practice

Consider the cut-in scenario again. In the original run, the merging car cuts in front of the ego vehicle, triggering the expected response. After a software update, however, the ego accelerates and passes the merging car before the maneuver occurs.

Under traditional regression testing, this might appear as a passing test: the SUT behaved correctly under the new conditions. But the original validation goal has silently vanished.

With intent detection, the system flags this outcome as “intent not achieved,” along with a confidence level and a human-readable explanation. Engineers know immediately that the test no longer serves its purpose and can take corrective action.
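The Python sketch below shows only the shape of such a verdict: an achieved flag, a confidence value, and a readable explanation. Foretellix’s detector is AI-based; here, purely for illustration, a hypothetical deterministic rule stands in for it. The rule checks the example intent of a cut-in ahead of the ego with a time gap of 1 second or less, and it always reports a confidence of 1.0 because it has no model uncertainty to express. The post-update gap of -6.7 m is an assumed value.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Verdict:
    achieved: bool
    confidence: float
    explanation: str

def check_cut_in(gap_at_merge_m, ego_speed_mps, max_time_gap_s=1.0):
    """Hypothetical deterministic stand-in for an AI intent detector.

    gap_at_merge_m: distance (m) from the ego's front to the merging car when it
    enters the ego lane; zero or negative means it entered alongside or behind.
    """
    if gap_at_merge_m <= 0:
        return Verdict(False, 1.0,
                       f"The merging car entered the ego lane at or behind the ego "
                       f"({gap_at_merge_m:+.1f} m), so no cut-in in front of it took place.")
    # A stationary ego has an unbounded time gap.
    time_gap = gap_at_merge_m / ego_speed_mps if ego_speed_mps > 0 else math.inf
    if time_gap <= max_time_gap_s:
        return Verdict(True, 1.0,
                       f"The car cut in {gap_at_merge_m:.1f} m ahead at a time gap of "
                       f"{time_gap:.2f} s, within the {max_time_gap_s:.1f} s limit.")
    return Verdict(False, 1.0,
                   f"The car cut in ahead, but at a time gap of {time_gap:.2f} s, "
                   f"above the {max_time_gap_s:.1f} s limit.")

before = check_cut_in(10.0, 60 / 3.6)  # original recording: 10 m ahead at 60 km/h
after = check_cut_in(-6.7, 80 / 3.6)   # hypothetical run after the update
print(before.achieved, before.explanation)
print(after.achieved, after.explanation)
```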

Looking Forward: Preserving Intent, Not Just Detecting It

Detecting when scenarios lose their purpose is the first step, and it is the focus of our work today.

We have also run early experiments with optimization technologies that could, in theory, adjust actor parameters to preserve intent with minimal changes. For instance, in the cut-in example, this might mean slightly increasing the merging vehicle’s speed so that the maneuver still takes place, even if the ego vehicle accelerates.
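As a toy illustration of the idea (not Foretellix’s optimization method), the Python sketch below uses bisection to find the smallest speed increase for the merging vehicle that restores a cut-in at least 5 m ahead of an 80 km/h ego. It assumes constant speeds, a fixed merge time, and an intent check that is monotonic in the speed increase; the recording (merging car at 55 km/h, starting 20 m ahead, merging at t = 3 s) is hypothetical.

```python
KMH_TO_MS = 1000 / 3600

def merge_gap(other_kmh, ego_kmh, other_start_m, merge_time_s):
    """Gap (m) from ego to merging car at merge time, constant-speed toy model."""
    return other_start_m + (other_kmh - ego_kmh) * KMH_TO_MS * merge_time_s

def min_speed_increase(intent_met, max_increase_kmh=30.0, tol_kmh=0.01):
    """Smallest speed increase (km/h) for which intent_met(increase) holds.

    Bisection; assumes intent_met is monotonic (once met, it stays met as the
    increase grows). Returns None if even max_increase_kmh is not enough.
    """
    if intent_met(0.0):
        return 0.0
    if not intent_met(max_increase_kmh):
        return None
    lo, hi = 0.0, max_increase_kmh
    while hi - lo > tol_kmh:
        mid = (lo + hi) / 2
        if intent_met(mid):
            hi = mid  # intent met: try a smaller change
        else:
            lo = mid  # intent missed: a larger change is needed
    return hi

def intent_met(increase_kmh):
    # Hypothetical intent: the merging car is at least 5 m ahead of an 80 km/h ego.
    return merge_gap(55.0 + increase_kmh, 80.0, 20.0, 3.0) >= 5.0

print(f"minimal speed increase: {min_speed_increase(intent_met):.2f} km/h")
```

With these numbers the search returns an increase of about 7 km/h: at 62 km/h the merging car is 5 m ahead of the ego after 3 s.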

This is a complex problem, but solving it would mean that regression suites remain robust and trustworthy even as the AV stack evolves.

Part of a Broader AI-First Toolchain

This work fits into a larger effort to make AV validation smarter and more adaptive. By applying AI across the toolchain, Foretellix is enabling scenario-based testing that can reason about intent, adjust to changing conditions, and provide transparent explanations.

The ultimate goal is to reduce manual effort, lower costs, and increase confidence in validation outcomes, ensuring that safety-critical behaviors are continuously tested, not lost in the noise of system updates.

Safeguarding Validation Against Intent Drift

Scenario-based testing is only as strong as the intent behind each test. When intent drifts, test suites lose their power to validate the behaviors that matter most.

By developing AI-based methods to detect and preserve test intent, Foretellix is helping AV teams maintain reliable, scalable regression pipelines. The result is faster validation cycles, stronger safety arguments, and greater trust in the path toward deployment.

Foretellix is your go-to partner for understanding whether your tests still serve their intended purpose over time, and potentially for ensuring that they do. Contact us today to learn more.