What is Physical AI?

TL;DR Physical AI is the evolution of artificial intelligence from systems that run in the cloud to systems embodied in moving machines that can interact with the physical world around them. Physical AI machines need multiple stages to make sense of the world, operate, and move:

- Sensors to collect the data about the world around it

- Perception system to make sense of the sensor data

- Prediction to estimate the next actions of other actors in the physical world

- Planning to make decisions

- Control to take actions

- Machine to actually move

In end-to-end physical AI systems, steps 2 through 5 (perception, prediction, planning, and control) are handled by a single neural network. These technologies let machines move beyond traditional rule-based, if-this-then-that (IFTTT) software logic to systems capable of predicting future world states and making human-like decisions.
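To make the distinction concrete, here is a minimal Python sketch of a modular stack next to an end-to-end one. All names, the dictionary-based sensor frame, the constant-velocity forecast, and the 20 m stopping rule are hypothetical; the point is only that stages 2 through 5 either run as separate, hand-connected components or collapse into one learned function.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Control:
    steering: float  # rad (hypothetical convention)
    accel: float     # m/s^2

# --- Modular stack: stages 2-5 as separate, hand-connected components ---
# Positions are relative to the ego vehicle, along the lane.

def perceive(sensor_frame: Dict) -> List[Dict]:
    # Hypothetical: the "sensor frame" already carries detected objects.
    return sensor_frame["detections"]

def predict(objects: List[Dict], horizon_s: float = 2.0) -> List[Dict]:
    # Constant-velocity forecast of each object's relative position.
    return [{**o, "future_x": o["x"] + o["vx"] * horizon_s} for o in objects]

def plan(predictions: List[Dict], ego_speed: float) -> float:
    # Target speed: stop if any object is predicted within 20 m ahead.
    blocked = any(0.0 < p["future_x"] < 20.0 for p in predictions)
    return 0.0 if blocked else ego_speed

def control(target_speed: float, ego_speed: float, gain: float = 0.5) -> Control:
    # Proportional speed controller, no steering.
    return Control(steering=0.0, accel=gain * (target_speed - ego_speed))

def modular_stack(sensor_frame: Dict, ego_speed: float) -> Control:
    return control(plan(predict(perceive(sensor_frame)), ego_speed), ego_speed)

# --- End-to-end stack: one learned function replaces stages 2-5 ---

def end_to_end_stack(policy: Callable[[Dict], Control], sensor_frame: Dict) -> Control:
    return policy(sensor_frame)
```

For example, an object 30 m ahead closing at 8 m/s is forecast 14 m ahead after 2 s, so the modular stack at 10 m/s commands -5 m/s². In the end-to-end version, that same decision would have to emerge from the learned `policy`.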

Key Takeaways

- Physical AI shifts AV/AD development from reactive rule-based logic to predictive world models that understand physical priors and social contexts.

- High-fidelity simulation and 3D Neural Reconstruction are required to create the dense, multi-modal datasets needed to train and validate perception systems against rare edge cases.

- Scaling validation requires a unified workflow that integrates real-world drive logs with automated scenario generation and reactive behavioral models.

What is Physical AI and how does it define the next generation of autonomous embodiments?

Physical AI represents a shift toward intelligent systems that are embodied in physical forms such as autonomous vehicles, humanoids, drones, and factory assistants. Unlike legacy rule-based software, these systems must predict environmental changes and reason through complex interactions in real time. This transformation requires models that can interpret multi-modal sensor data and execute actions within physical constraints.

Foundational Frameworks for Embodiments

The architecture of these systems is built on open frameworks such as OpenUSD (Universal Scene Description) to standardize 3D data. This standardization allows developers to build accurate digital twins that remain consistent from initial training to deployment. These frameworks support diverse embodiments, from heavy mining equipment to logistics robots and passenger vehicles.

World models act as the backbone of these platforms, giving them the capacity to understand the physical world. Models such as those in the Foretellix physical AI toolchain generate action-conditioned predictions, which let a machine foresee the consequences of a maneuver before executing it.
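Action-conditioned prediction can be sketched as imagining each candidate maneuver with a world model and keeping only those whose predicted outcome is safe. In this minimal sketch, a hand-written 1D kinematic model stands in for a learned world model; `State`, `world_model`, the 3 s horizon, and the 5 m safety gap are hypothetical and are not the Foretellix API.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

@dataclass(frozen=True)
class State:
    ego_x: float   # ego position along the lane (m)
    ego_v: float   # ego speed (m/s)
    lead_x: float  # lead vehicle position (m)
    lead_v: float  # lead vehicle speed (m/s), assumed constant

def world_model(s: State, accel: float, dt: float = 0.1) -> State:
    """Stand-in for a learned, action-conditioned world model:
    predicts the next state given the current state and the ego action."""
    ego_v = max(0.0, s.ego_v + accel * dt)
    return State(ego_x=s.ego_x + ego_v * dt, ego_v=ego_v,
                 lead_x=s.lead_x + s.lead_v * dt, lead_v=s.lead_v)

def rollout_min_gap(s: State, accel: float, steps: int = 30) -> float:
    """Imagine holding `accel` for `steps` ticks; return the smallest predicted gap."""
    min_gap = s.lead_x - s.ego_x
    for _ in range(steps):
        s = world_model(s, accel)
        min_gap = min(min_gap, s.lead_x - s.ego_x)
    return min_gap

def choose_action(s: State, candidates: List[float],
                  safe_gap: float = 5.0) -> Optional[float]:
    """Pick the most assertive candidate whose imagined rollout keeps a safe gap.
    Returns None if no candidate is predicted to be safe."""
    gaps: Dict[float, float] = {a: rollout_min_gap(s, a) for a in candidates}
    safe = [a for a, g in gaps.items() if g >= safe_gap]
    return max(safe) if safe else None
```

With the ego vehicle at 15 m/s, 20 m behind a lead car at 5 m/s, holding speed or braking at -3 m/s² is predicted to shrink the gap below 5 m within 3 s, so only the -6 m/s² candidate is selected, before any action is actually executed.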

Transitioning from Rules to Learning

Legacy autonomous systems often relied on rigid, hand-coded IFTTT logic, which fails to scale to the near-infinite variability of the real world. Physical AI uses generative models to handle the long tail of rare events. Because perception and planning are tightly coupled in end-to-end AI models, this shift requires new methods for full-stack validation rather than component-level testing.

Why is a hybrid of simulation and road testing the ideal approach for Physical AI safety?

Physical AI safety cannot be achieved through real-world road testing alone because critical edge cases occur too rarely to validate performance with confidence. A hybrid approach combining high-fidelity simulation with physical testing is the ideal solution for scaling development. This allows engineers to safely and repeatedly test dangerous scenarios while grounding the results in real-world data.

Limitations of Solo Road Testing

Traditional road testing is slow, expensive, and often incomplete for modern AV/AD stacks. Demonstrating safety with high statistical confidence would require an impractically large number of real-world miles, and 100% confidence cannot be reached with any finite amount of driving. Furthermore, real-world data can become obsolete if sensor hardware or mounting positions change, necessitating expensive re-collection.
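A short calculation shows the scale of the problem. Assuming failures follow a Poisson process, driving n miles with zero failures supports the claim that the true failure rate is below λ at confidence C only when e^(-λn) ≤ 1 - C, that is n ≥ -ln(1 - C)/λ. The target rate of 1.09 failures per 100 million miles below is an illustrative value.

```python
import math

def miles_for_zero_failure_demo(failure_rate_per_mile: float, confidence: float) -> float:
    """Failure-free miles needed to claim, at the given confidence, that the
    true failure rate is below `failure_rate_per_mile`.

    Assumes a Poisson failure process: P(0 failures in n miles) = exp(-rate * n).
    Solving exp(-rate * n) <= 1 - confidence gives n >= -ln(1 - confidence) / rate.
    """
    if not 0.0 < confidence < 1.0:
        # At confidence = 1 the bound is infinite: no finite mileage suffices.
        raise ValueError("confidence must be strictly between 0 and 1")
    return -math.log(1.0 - confidence) / failure_rate_per_mile

target_rate = 1.09 / 100_000_000  # illustrative: 1.09 failures per 100 million miles
miles = miles_for_zero_failure_demo(target_rate, 0.95)
print(f"{miles / 1e6:.0f} million failure-free miles")  # about 275 million
```

The required mileage grows without bound as C approaches 1, and raising C from 0.95 to 0.99 alone pushes the figure above 420 million miles.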

Simulation as a Design Driver

High-fidelity sensor simulation allows for early validation long before hardware designs are frozen. This flexibility ensures that perception algorithms are robust against environmental noise like heavy snow or rain before a single physical mile is driven.

How does the Foretellix toolchain accelerate the scalable development data flywheel?

The Foretellix Physical AI Toolchain accelerates the scalable development data flywheel by automating the curation and extraction of high-value scenarios from massive datasets. Without the right data, the task of testing becomes inefficient and bloated with uneventful miles. By focusing on safety-critical events, the toolchain reveals hidden bugs and prioritizes resources for closing performance gaps.

Automated Scenario Curation

A critical component of the flywheel is scenario curation, which transforms unstructured logs into targeted training data. This automated methodology identifies rare real-world edge cases that are essential for both AI training and validation. The process removes perception noise and uninformative background frames to focus on meaningful interactions like near-collisions.
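One simple way to discard uneventful frames is a time-to-collision (TTC) filter. The sketch below assumes a hypothetical per-frame log format (`Frame` with the gap to the nearest in-path actor and the closing speed) and a 2 s TTC threshold; it keeps only near-collision frames and groups consecutive ones into events. It does not attempt the perception-noise removal that curation also performs, and it is not how the Foretellix toolchain is implemented.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Frame:
    t: float              # timestamp (s)
    gap_m: float          # distance to the nearest actor in the ego path (m)
    closing_speed: float  # m/s, positive when the gap is shrinking

def time_to_collision(f: Frame) -> Optional[float]:
    # TTC is only defined while the gap is closing.
    return f.gap_m / f.closing_speed if f.closing_speed > 0 else None

def curate_events(frames: List[Frame], ttc_threshold_s: float = 2.0,
                  max_gap_s: float = 0.5) -> List[List[Frame]]:
    """Keep frames whose TTC is below the threshold and group frames that are
    at most `max_gap_s` apart into events; all other frames are dropped."""
    events: List[List[Frame]] = []
    for f in frames:
        ttc = time_to_collision(f)
        if ttc is None or ttc >= ttc_threshold_s:
            continue  # uneventful frame
        if events and f.t - events[-1][-1].t <= max_gap_s:
            events[-1].append(f)  # continues the current event
        else:
            events.append([f])    # starts a new event
    return events
```

Each returned event is a short clip around a near-collision, which is the kind of targeted data the curation step aims to surface from long logs.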

Scenario Variation and Enrichment

Once gaps are identified, the Foretellix physical AI toolchain generates millions of hyper-realistic synthetic variations of those events. These unscripted tests expose unknown driving edge cases that would otherwise take years of physical driving to encounter.
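Variation generation can be sketched as sampling parameters around a recorded base scenario. This minimal version assumes a hypothetical `CutInScenario` with four parameters and uses plain uniform sampling with a fixed seed; it is a stand-in for whatever sampling strategy the toolchain actually uses, which the source does not describe.

```python
import random
from dataclasses import dataclass, replace
from typing import Dict, List, Tuple

@dataclass(frozen=True)
class CutInScenario:
    ego_speed: float      # m/s
    cut_in_gap: float     # longitudinal gap when the other car cuts in (m)
    cut_in_speed: float   # speed of the cutting-in car (m/s)
    rain_mm_per_h: float  # precipitation intensity

def generate_variations(base: CutInScenario,
                        ranges: Dict[str, Tuple[float, float]],
                        n: int, rng_seed: int = 0) -> List[CutInScenario]:
    """Sample `n` variants of a recorded scenario: each parameter named in
    `ranges` is drawn uniformly from its (low, high) range, and all other
    parameters keep the base scenario's values."""
    rng = random.Random(rng_seed)  # fixed seed -> reproducible test suite
    return [
        replace(base, **{name: rng.uniform(lo, hi) for name, (lo, hi) in ranges.items()})
        for _ in range(n)
    ]
```

Seeding the generator means a failing variant can be regenerated exactly for debugging, while changing the seed or widening the ranges explores new parts of the scenario space.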

Unified Coverage and Metrics

Objective measurement is achieved through Coverage and Checkers. Coverage goals ensure the system has been exposed to the full range of conditions within its ODD (Operational Design Domain). Checkers assess pass or fail criteria based on formal system requirements, providing traceable evidence for safety cases.
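A minimal sketch of both ideas, assuming a hypothetical ODD split into four speed bins and four weather conditions, and a hypothetical requirement that the ego vehicle never come within 2 m of another actor: coverage records which ODD buckets at least one test has exercised, and the checker turns each test result into a pass or fail verdict.

```python
from dataclasses import dataclass
from itertools import product
from typing import List, Set, Tuple

SPEED_BINS = [(0, 30), (30, 60), (60, 90), (90, 130)]  # km/h, hypothetical ODD
WEATHER = ["clear", "rain", "snow", "fog"]

@dataclass
class TestResult:
    speed_kmh: float
    weather: str
    min_gap_m: float  # closest approach to another actor during the test

def speed_bin(speed: float) -> int:
    for i, (lo, hi) in enumerate(SPEED_BINS):
        if lo <= speed < hi:
            return i
    raise ValueError(f"speed {speed} km/h is outside the ODD")

def _buckets(results: List[TestResult]) -> Set[Tuple[int, str]]:
    return {(speed_bin(r.speed_kmh), r.weather) for r in results}

def coverage(results: List[TestResult]) -> float:
    """Fraction of (speed bin, weather) buckets hit by at least one test."""
    return len(_buckets(results)) / (len(SPEED_BINS) * len(WEATHER))

def uncovered(results: List[TestResult]) -> List[Tuple[int, str]]:
    """ODD buckets no test has exercised yet: candidates for new scenarios."""
    hit = _buckets(results)
    return [b for b in product(range(len(SPEED_BINS)), WEATHER) if b not in hit]

def check_min_gap(r: TestResult, required_gap_m: float = 2.0) -> bool:
    """Pass/fail checker for the hypothetical minimum-gap requirement."""
    return r.min_gap_m >= required_gap_m
```

Keeping coverage and checking separate mirrors the split described above: coverage answers "have we tested enough of the ODD?", while checkers answer "did each test meet its requirement?".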

What role do 3D neural reconstruction and reactive actors play in validating embodiments?

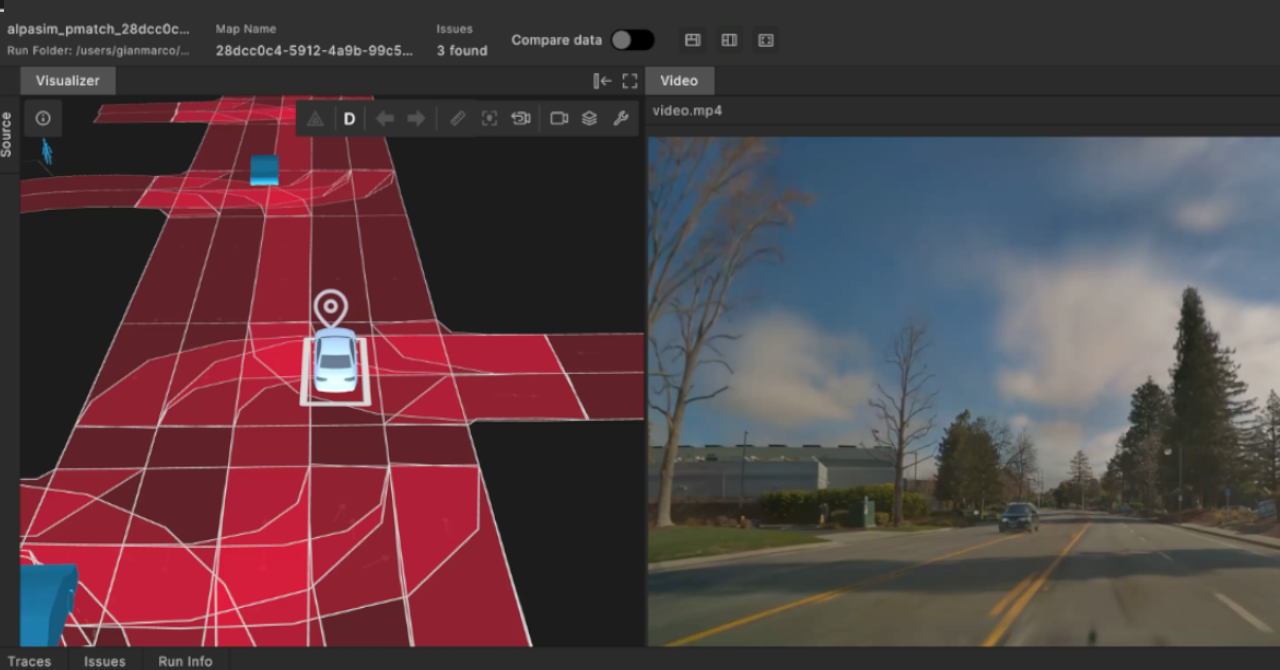

3D Neural Reconstruction and reactive actors improve model robustness by creating interactive, physically grounded digital twins that adapt to the system’s decisions. Neural reconstruction converts real-world drive logs into photorealistic 3D scenes using Gaussian representations. This allows engineers to “replay” a real-world event but with different sensor placements or unscripted traffic behaviors.
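The difference between a replayed actor and a reactive one can be shown with a car-following model. This sketch swaps in the Intelligent Driver Model (IDM), a standard car-following model, as a stand-in for whatever behavioral models the toolchain uses; the parameters and initial conditions are illustrative, and the vehicles are treated as point masses. The ego vehicle brakes harder than it did in the recorded log, and the follower either replays its logged constant speed or reacts with IDM.

```python
import math
from dataclasses import dataclass

@dataclass
class IDMParams:
    v0: float = 15.0    # desired speed (m/s)
    T: float = 1.5      # desired time headway (s)
    a_max: float = 1.5  # maximum acceleration (m/s^2)
    b: float = 2.0      # comfortable deceleration (m/s^2)
    s0: float = 2.0     # minimum standstill gap (m)
    delta: float = 4.0  # acceleration exponent

def idm_accel(v: float, gap: float, v_lead: float, p: IDMParams) -> float:
    """IDM acceleration of a follower given its speed, the gap to the
    vehicle ahead, and that vehicle's speed."""
    dv = v - v_lead  # approach rate
    s_star = p.s0 + max(0.0, v * p.T + v * dv / (2 * math.sqrt(p.a_max * p.b)))
    return p.a_max * (1 - (v / p.v0) ** p.delta - (s_star / max(gap, 0.1)) ** 2)

def min_gap_behind_braking_ego(ego_brake: float, reactive: bool,
                               steps: int = 50, dt: float = 0.1) -> float:
    """Ego (ahead) brakes at `ego_brake` m/s^2; return the smallest gap to the
    follower. A negative value means the follower drove through the ego."""
    p = IDMParams()
    ego_x, ego_v = 30.0, 15.0  # ego starts 30 m ahead of the follower
    fol_x, fol_v = 0.0, 15.0
    min_gap = ego_x - fol_x
    for _ in range(steps):
        # Replayed actor keeps its logged speed; reactive actor uses IDM.
        a = idm_accel(fol_v, ego_x - fol_x, ego_v, p) if reactive else 0.0
        ego_v = max(0.0, ego_v - ego_brake * dt)
        fol_v = max(0.0, fol_v + a * dt)
        ego_x += ego_v * dt
        fol_x += fol_v * dt
        min_gap = min(min_gap, ego_x - fol_x)
    return min_gap
```

With the ego braking at 4 m/s², the replayed follower ends up with a negative gap, an artifact of non-reactive replay rather than a meaningful failure, while the reactive follower slows down and keeps a positive gap.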

FAQ

How does Foretellix help my team move toward end-to-end (E2E) AI models?

Foretellix provides the closed-loop validation environment needed to test E2E models, in which perception and planning are tightly coupled [7]. By providing sensor-inclusive simulation and reactive traffic agents, the toolchain lets teams verify, in a deterministic workflow, that perception inputs translate into safe planning and control decisions [3].
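Closed-loop testing means the model's own actions change what it observes next, unlike open-loop replay of recorded sensor data. The minimal sketch below assumes a hypothetical 1D world with a stationary obstacle, Gaussian noise on the observed gap, and a toy braking rule standing in for an E2E model; seeding the noise generator makes every run reproducible, which is one simple way to get deterministic results.

```python
import random
from typing import Callable, List

Policy = Callable[[float], float]  # observed gap (m) -> ego acceleration (m/s^2)

def closed_loop_run(policy: Policy, seed: int, steps: int = 100,
                    dt: float = 0.1) -> List[float]:
    """Feed the policy's action back into the world, then produce the next
    noisy observation from the updated world. Returns the true gap per step."""
    rng = random.Random(seed)        # seeded sensor noise -> reproducible runs
    gap, ego_speed = 40.0, 10.0      # hypothetical initial conditions
    gaps: List[float] = []
    for _ in range(steps):
        observed_gap = gap + rng.gauss(0.0, 0.5)  # simulated sensor noise
        accel = policy(observed_gap)
        ego_speed = max(0.0, ego_speed + accel * dt)
        gap -= ego_speed * dt        # obstacle is stationary
        gaps.append(gap)
    return gaps

def toy_policy(observed_gap: float) -> float:
    # Stand-in for an E2E model: brake hard once the obstacle looks close.
    return -6.0 if observed_gap < 20.0 else 0.0
```

Running `closed_loop_run(toy_policy, seed=7)` twice yields identical gap traces, so a failure found in one run can be replayed exactly; changing the seed explores different noise realizations.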

How does Foretellix specifically improve the efficiency of my data-driven testing workflow?

The Foretify Physical AI Toolchain automates the curation process, allowing your team to skip billions of uneventful miles and focus on safety-critical edge cases. By identifying and closing ODD gaps with synthetic variation, you can accelerate the scalable development data flywheel and reduce time to market.