More than 350 years ago, Sir Isaac Newton formulated the theory of gravity and the laws of motion. These include the relationships between variables such as speed, time, distance, and acceleration that natural motions universally adhere to. Many years later, ASAM (OpenSCENARIO® 2.0.0), VVM (Pegasus family), and ISO 34501 introduced a scale of distinct scenario description levels to differentiate between the available scenario creation styles and technology generations. The scale is called “scenario abstraction levels.” In our ongoing discussions with users, we have realized there is still some confusion about the levels and what is required to make them useful. In this blog, we define the abstraction levels, explain how to attain the productivity and robustness promised by both logical and abstract scenarios, and even acknowledge Isaac Newton’s contribution to impactful ADAS and ADS V&V projects.

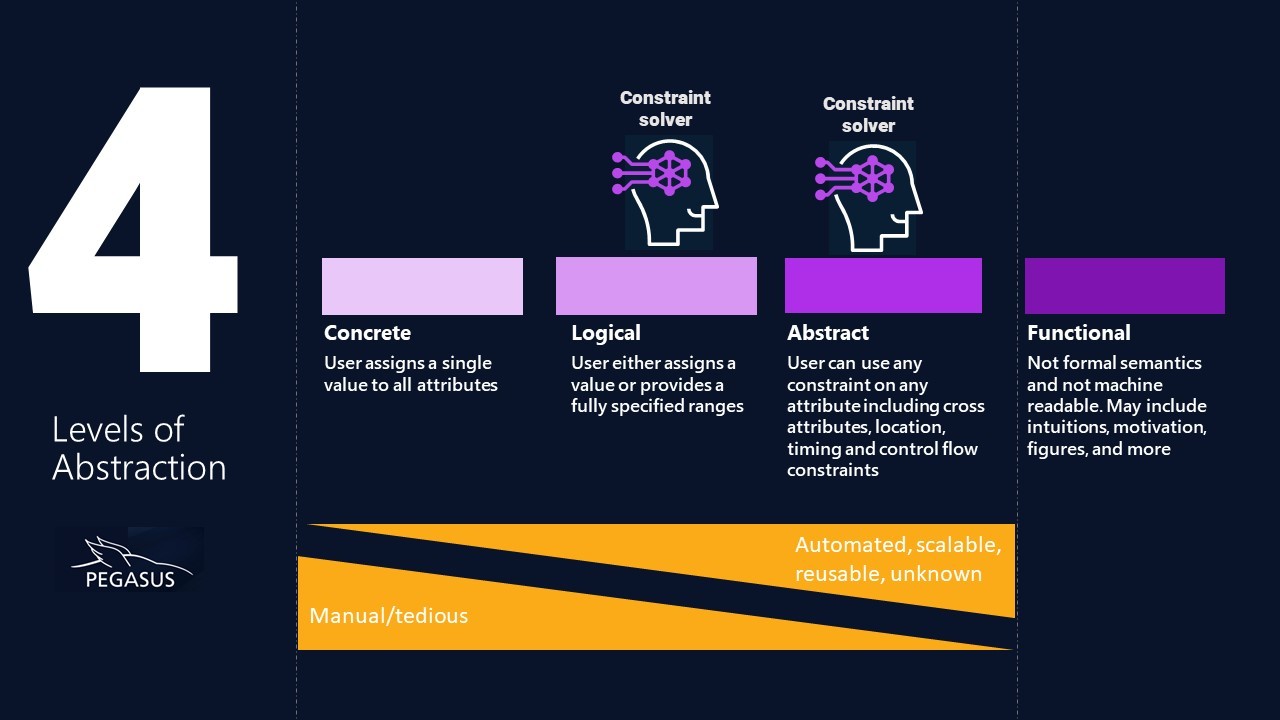

What are the four levels of abstraction?

The abstraction levels define a spectrum of scenario descriptions and their related capabilities. Each level adds more scenario control and automation than the previous one. The concrete, logical, and abstract levels are formal and intended to be compiled by tools, whereas a functional description is geared toward human readers and thus may include free-form English, supporting images, and more. The definition of the four abstraction levels is straightforward.

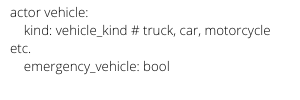

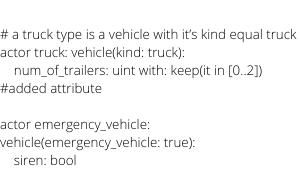

- Concrete scenarios allow only attribute value assignments.

- Logical scenarios allow assignments or attribute value selection according to a distribution from fully specified ranges.

- Abstract scenarios allow any possible constraints, including ranges and distributions, cross-attribute constraints, high-level location specification, and timing constraints.

- Functional scenarios are geared toward humans. They are not a formal description and thus are not machine-readable.

If you know the level of abstraction basics, feel free to jump to the next section. If not, here are more details.

The four levels of abstraction are illustrated in the figure below:

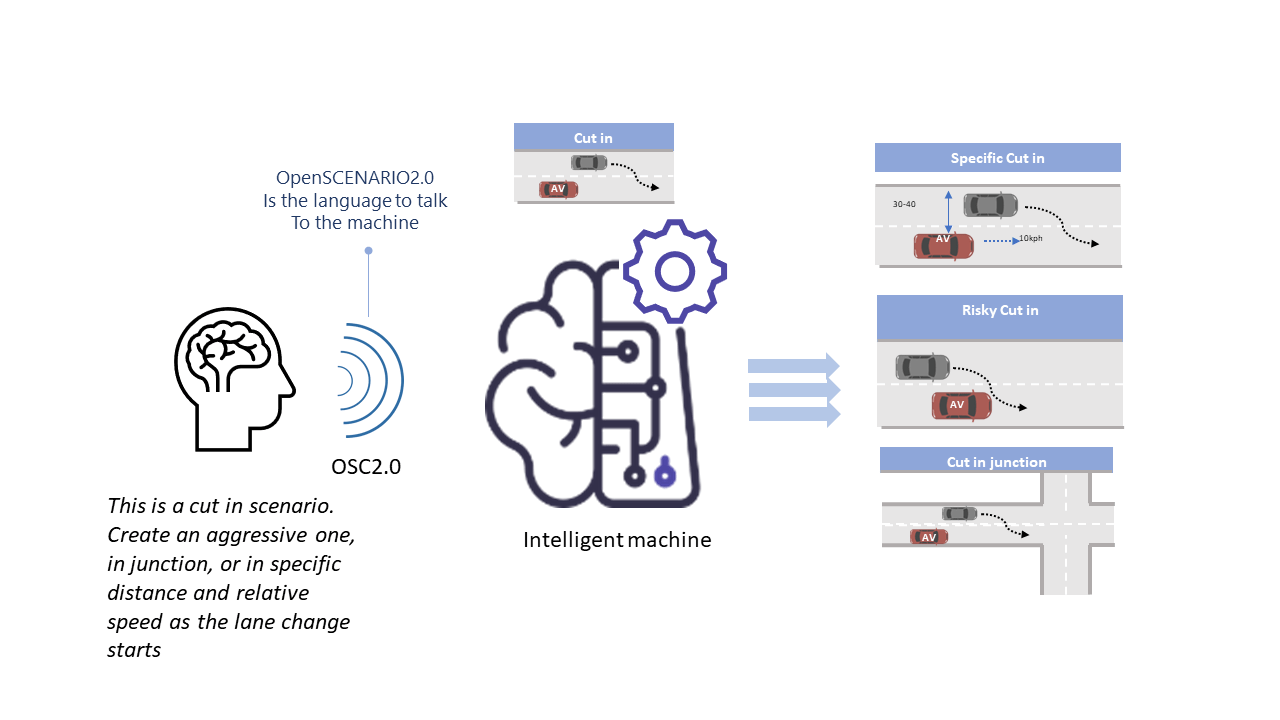

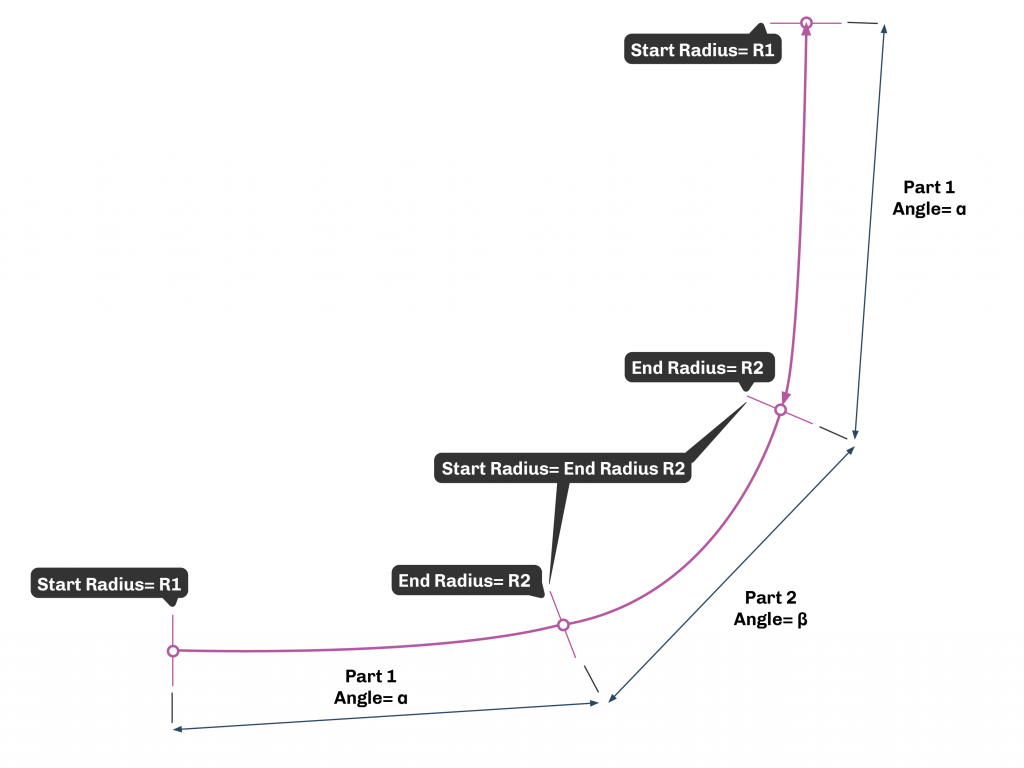

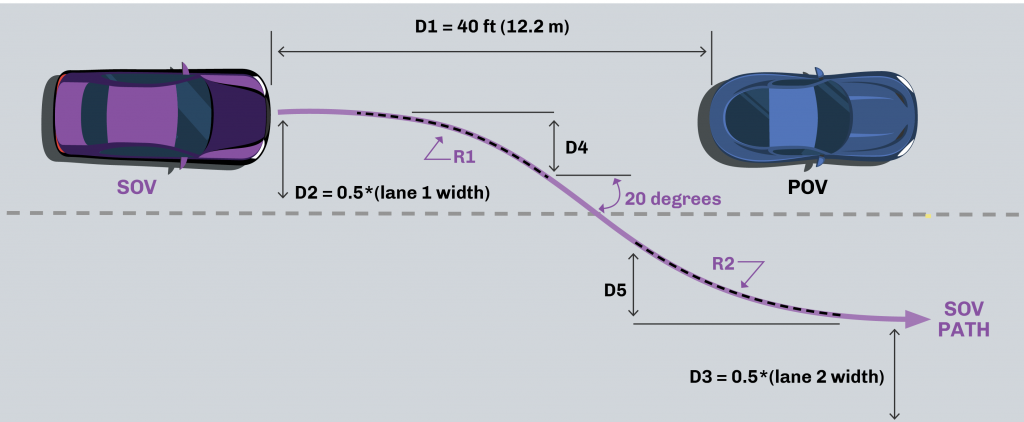

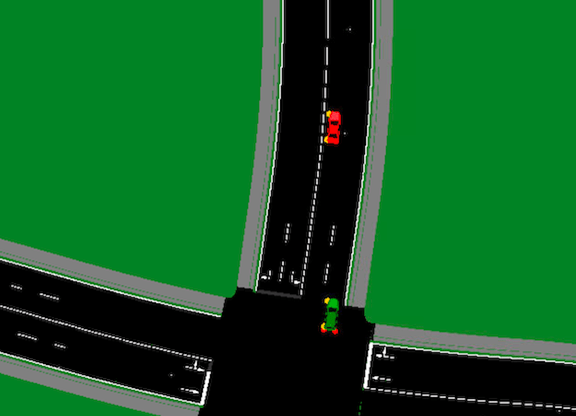

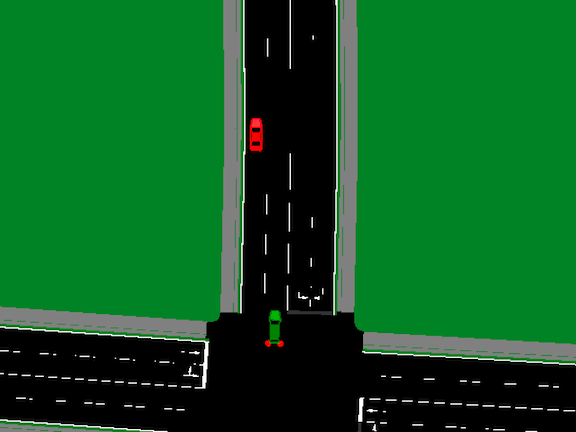

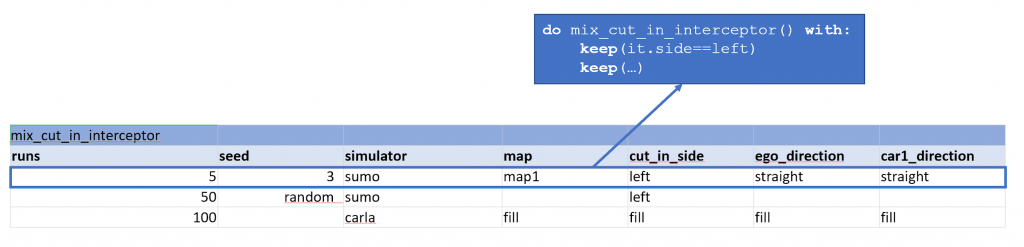

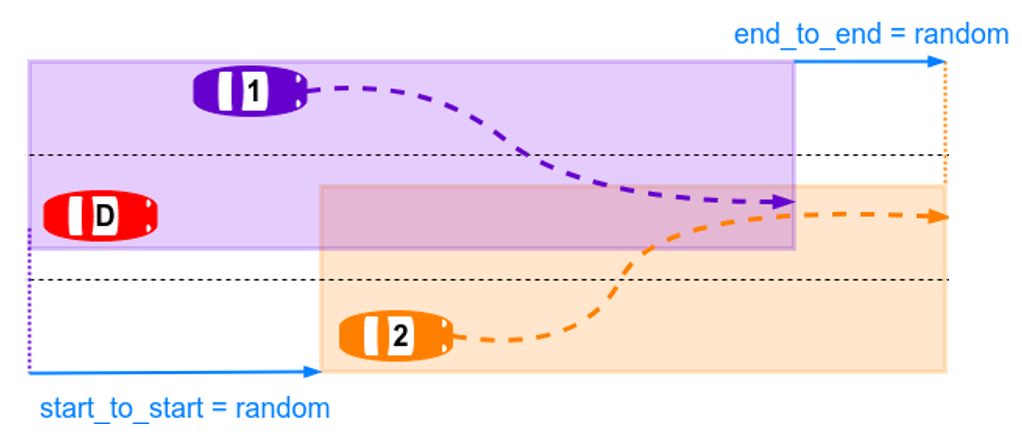

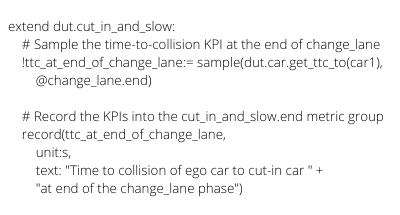

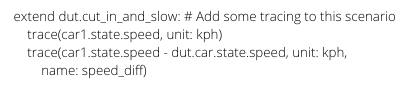

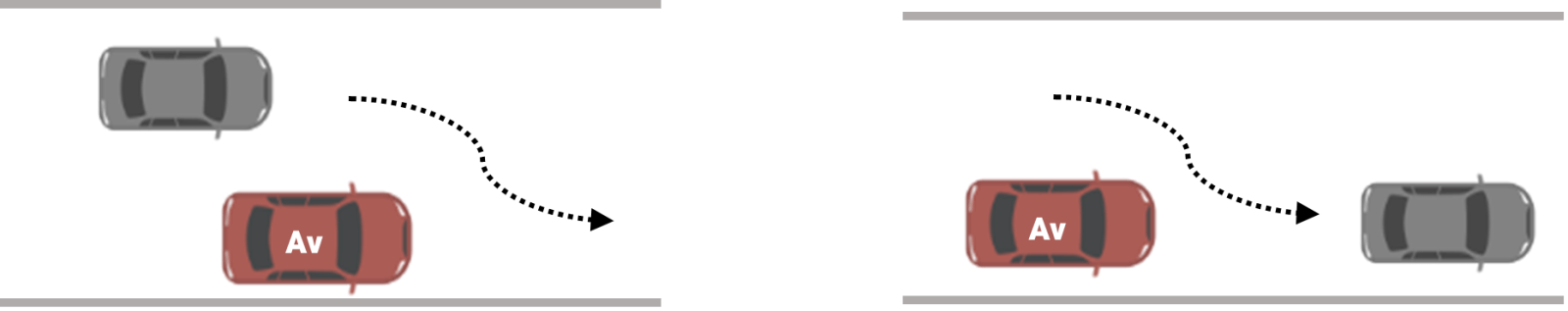

With concrete scenarios, all scenario attributes must be carefully calculated and manually assigned by the user. For example, set car1’s speed to 10 kph and start the lane-change maneuver at a specific XYZ location. (See Figure 2 for an illustration of the cut-in scenario.) All attributes, including maps, vehicle speeds, distances, accelerations, and maneuver locations, must be specified in a concrete scenario. The laws of physics impose multiple dependencies among these attributes: for example, at constant speed the distance is the product of speed and time, and the average acceleration equals the speed difference divided by time. Users must also select a proper location according to the scenario’s nature and attributes. For example, at least two lanes are needed for the cut-in scenario, and different speed settings require road segments of different lengths. Failing to provide a consistent set of attributes or locations will likely result in a meaningless scenario and wasted human and machine resources.
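The kind of bookkeeping a concrete scenario pushes onto the user can be illustrated with a small Python sketch. It is not taken from any tool: the `ConcreteCutIn` container, its field names, and the one-meter tolerance are all assumptions made for illustration, and the distance check assumes constant acceleration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConcreteCutIn:
    """Hypothetical container for a fully specified (concrete) cut-in."""
    start_speed_kph: float   # NPC speed when the scenario starts
    end_speed_kph: float     # NPC speed when the lane change completes
    duration_s: float        # time between those two points
    distance_m: float        # distance the user says the NPC covers
    road_length_m: float     # length of the chosen road segment
    lane_count: int          # lanes available at the chosen location


def kph_to_mps(kph: float) -> float:
    return kph / 3.6


def average_acceleration_mps2(s: ConcreteCutIn) -> float:
    """Average acceleration = speed difference divided by time."""
    return (kph_to_mps(s.end_speed_kph) - kph_to_mps(s.start_speed_kph)) / s.duration_s


def consistency_errors(s: ConcreteCutIn, tol_m: float = 1.0) -> List[str]:
    """List the physical or location inconsistencies found in the scenario."""
    errors = []
    v0 = kph_to_mps(s.start_speed_kph)
    v1 = kph_to_mps(s.end_speed_kph)
    # Assuming constant acceleration, distance = average speed * time.
    expected_m = 0.5 * (v0 + v1) * s.duration_s
    if abs(expected_m - s.distance_m) > tol_m:
        errors.append(
            f"distance should be about {expected_m:.0f} m, not {s.distance_m:.0f} m"
        )
    if s.distance_m > s.road_length_m:
        errors.append("road segment is shorter than the maneuver distance")
    if s.lane_count < 2:
        errors.append("a cut-in needs at least two lanes")
    return errors


consistent = ConcreteCutIn(36.0, 72.0, 10.0, 150.0, 500.0, 2)
inconsistent = ConcreteCutIn(36.0, 72.0, 10.0, 400.0, 300.0, 1)
print(consistency_errors(consistent))
print(consistency_errors(inconsistent))
```

Every one of these checks is something the scenario writer has to get right by hand for each concrete scenario.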

The next level of abstraction is the

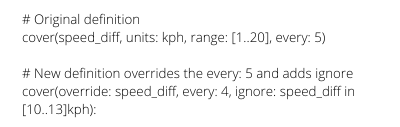

logical scenario. As with the previous abstraction level, the user must specify a set of locations and attributes, but there is one significant addition – individual attributes can be left unassigned within a fully specified range. For example, instead of hardcoding the speed to 45kph, a speed range between 10 to 90 kph can be provided. The value selection can originate from aggregated real-life data distributions or be biased to force challenging conditions. The assumption is that the tedious calculation involved with every concrete scenario will be eliminated, and scale will be reached. (More about this assumption below. ?)
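As a rough illustration of value selection from a fully specified range, the Python sketch below draws a speed either uniformly or from a triangular distribution peaked near the top of the range to bias toward challenging conditions. The function name, the `mode_kph` parameter, and the choice of a triangular distribution are assumptions for this example; a real project might instead fit the distribution to recorded driving data.

```python
import random
from typing import Optional

SPEED_RANGE_KPH = (10.0, 90.0)  # the fully specified range from the text


def sample_speed_kph(rng: random.Random, mode_kph: Optional[float] = None) -> float:
    """Pick a speed inside the range.

    With no mode the draw is uniform; with a mode the draw follows a
    triangular distribution peaked at that speed.
    """
    low, high = SPEED_RANGE_KPH
    if mode_kph is None:
        return rng.uniform(low, high)
    return rng.triangular(low, high, mode_kph)


rng = random.Random(7)  # fixed seed so a run can be reproduced
print([round(sample_speed_kph(rng), 1) for _ in range(5)])
print([round(sample_speed_kph(rng, mode_kph=85.0), 1) for _ in range(5)])
```

Note that this only selects the speed. Nothing here adjusts the other attributes that depend on it, which is exactly the gap discussed later in this blog.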

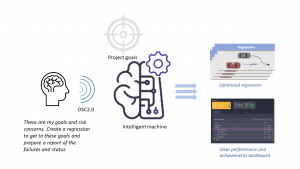

The automotive validation journey is a challenging process. Equipped with the requirements, a test plan, and gut feeling, validation engineers need to span the scenario space and look between the cracks for expected and unexpected bugs. This is the main motivation for abstract scenarios.

Abstract scenarios allow total freedom for the user to express any desired dependency using any form of constraint, including ranges. Constraints can be:

- Cross-attribute constraints – e.g., the NPC’s speed should be slower than the location’s legal speed.

- Abstract location constraints – e.g., create a cut-in near a junction. Note that the scenario does not force a specific junction but requires a junction nearby.

- Timing and execution constraints – e.g., the lane change should take place at a half-second time headway. There are multiple ways to implement such a request, but the user just wants to capture the timing requirement in a constraint.

- Control flow and composition constraints – e.g., the scenario should combine the cut-in and oncoming scenarios.

Abstract scenarios enable a goal-based approach in which the user can ask for any desired scenario (set the goal for the tool), trusting the tool to deliver multiple consistent results.
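To make the goal-based idea concrete, here is a deliberately naive Python sketch. It states a cross-attribute constraint and an abstract location constraint as predicates and finds a satisfying combination by rejection sampling. Rejection sampling is a stand-in chosen for brevity, not how a production constraint solver works, and the map data, thresholds, and names are all invented for the example.

```python
import random
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class RoadSegment:
    name: str
    lanes: int
    speed_limit_kph: float
    distance_to_junction_m: float


# Hypothetical map data, invented for this sketch.
MAP = [
    RoadSegment("highway_a", 3, 110.0, 2500.0),
    RoadSegment("urban_b", 2, 50.0, 40.0),
    RoadSegment("urban_c", 1, 30.0, 15.0),
    RoadSegment("arterial_d", 2, 70.0, 80.0),
]

# Each constraint is a predicate over (segment, NPC speed in kph).
CONSTRAINTS = [
    lambda seg, v: seg.lanes >= 2,                       # a cut-in needs two lanes
    lambda seg, v: seg.distance_to_junction_m <= 100.0,  # "near a junction"
    lambda seg, v: v < seg.speed_limit_kph,              # slower than the legal speed
]


def solve(rng: random.Random, max_tries: int = 10_000) -> Tuple[RoadSegment, float]:
    """Draw candidates until one satisfies every constraint."""
    for _ in range(max_tries):
        seg = rng.choice(MAP)
        speed_kph = rng.uniform(10.0, 90.0)
        if all(check(seg, speed_kph) for check in CONSTRAINTS):
            return seg, speed_kph
    raise RuntimeError("no consistent scenario found")


segment, speed = solve(random.Random(42))
print(segment.name, round(speed, 1))
```

The user states what must hold, not which junction or which speed to use. Each seed yields a different consistent result.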

Functional scenarios are non-formal descriptions of the desired scenarios, including the goal, motivation, and intent. For example, a non-formal description in English may stipulate that to validate the Ego in cut-in scenarios, we should both force a maneuver from the Ego to avoid danger and ensure that the Ego does not get confused by the cut-in maneuvers. It may include images (such as figure 2 above) to illustrate the scenario to the test writer.

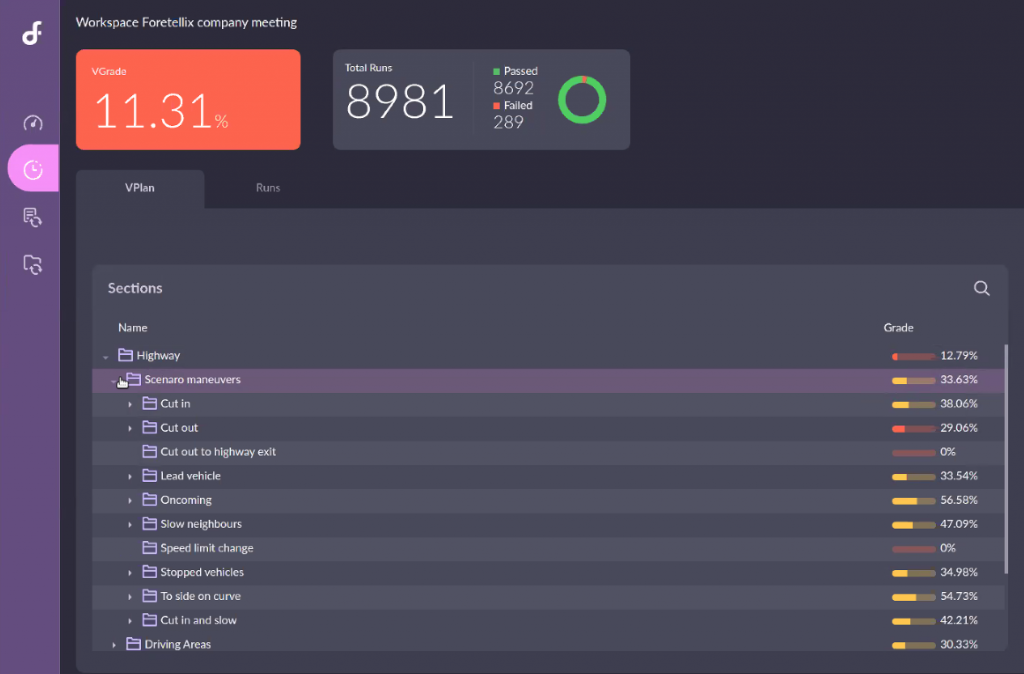

Why are users disappointed by logical scenarios? And what is required to fix that?

Logical scenarios are a significant improvement over concrete scenarios as they capture a desired scenario parameter space, but they are misleading to both users and vendors. Letting the tool select a speed between 10 and 90 kph also requires the tool to consistently adjust starting locations, other vehicle speeds, accelerations, maneuver timing, and more. This is where Isaac Newton’s discoveries cannot be ignored – tools must be smart enough to propagate the speed selection to other attributes.

This misunderstanding is not a minor one. A project team’s hope of reaching the necessary large-scale test suite and replacing tedious manual effort falls apart due to this. Without a tool capable of proper inferences and propagation of values, logical scenarios may result in a massive number of meaningless scenarios.
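A back-of-the-envelope Python sketch shows what propagating a speed selection means. Once an NPC speed is chosen, the starting offset and the required road length follow from basic kinematics. The sketch assumes constant speeds and a one-dimensional road with the Ego starting at position 0. The function and key names are hypothetical.

```python
from typing import Dict


def kph_to_mps(kph: float) -> float:
    return kph / 3.6


def derive_dependents(
    npc_speed_kph: float,
    ego_speed_kph: float,
    time_to_cut_in_s: float,
    headway_s: float,
) -> Dict[str, float]:
    """Derive attributes that must change whenever the NPC speed changes."""
    v_npc = kph_to_mps(npc_speed_kph)
    v_ego = kph_to_mps(ego_speed_kph)
    # Gap the NPC must have ahead of the Ego at the moment of the cut-in.
    gap_m = headway_s * v_ego
    # Solve npc_start + v_npc * T = v_ego * T + gap for npc_start.
    npc_start_m = gap_m + (v_ego - v_npc) * time_to_cut_in_s
    ego_end_m = v_ego * time_to_cut_in_s
    npc_end_m = npc_start_m + v_npc * time_to_cut_in_s
    # The road must cover everything from the rearmost start to the frontmost end.
    min_road_length_m = max(ego_end_m, npc_end_m) - min(0.0, npc_start_m)
    return {
        "gap_at_cut_in_m": gap_m,
        "npc_start_m": npc_start_m,
        "min_road_length_m": min_road_length_m,
    }


# The same range, two different selections, two different starting layouts.
print(derive_dependents(36.0, 72.0, 10.0, 0.5))
print(derive_dependents(90.0, 72.0, 10.0, 0.5))
```

With a slower NPC the start offset is positive (the NPC begins ahead of the Ego); with a faster NPC it is negative (the NPC begins behind and overtakes). A tool that picks the speed without recomputing these values produces the meaningless scenarios described above.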

Teams that plan to adopt an optimization workflow (applying mathematical algorithms to gravitate towards risk) also get their share of disappointment. No automation has been available to take the optimizer’s value recommendations and create a consistent scenario around them. While some attributes, like time-of-day or road friction, might be independent of others, most scenario attributes are tightly connected, often in surprising ways.

The smart technology that makes the needed inferences is called a constraint solver; the industry now realizes that constraint solvers are critical for getting consistent logical scenarios or enabling optimization workflows. Even if users do not specify constraints in their scenarios, the system needs to consider implicit constraints (like the laws of physics), which the constraint solver resolves. By the way, this understanding has already proven mandatory in other industries, where many of us at Foretellix have applied it for the last ~20 years.

What is the added value of abstract scenarios?

ASAM and VVM have abstract scenario levels for all the right reasons – abstract scenarios are game changers for scenario-based testing productivity and robustness.

An efficient testing workflow is not about throwing an endless number of scenarios, hoping they will expose bugs; it is a large-scale guided hunt following the test plan and intelligently exploring the implementation cracks. You need complete control over the generated scenarios (as well as other capabilities) to follow the test plan, and you need to combine randomness and the unknown. In your journey to span scenario space and find these bugs, you will constantly refine the scenarios and further direct them toward different areas of concern – abstract scenario controls are a required tool for the job, but it will still be hard work!

One of the most significant value propositions of abstract scenarios is the ability to aggregate generic V&V expertise within executable packages and to enable its use across multiple operational design domains (ODDs). Assume that you have defined a valuable concrete or logical cut-in scenario that challenges the sensitivity of an autonomous driving function. Such a scenario cannot be migrated to various project ODDs without manual adjustments. If it was designed for a sedan Ego, it might not be applicable to a truck with totally different dynamics. You need to manually adjust it to another map or move it from an urban to a highway setting. It must be modified for left-hand traffic if your ODD includes London. For development productivity, you need to combine scenarios modularly and have a technology that can resolve the new dependencies of the combined scenarios. For example, you need to be able to execute the cut-in and oncoming scenarios at the same time and have a tool that can find locations appropriate for both these scenarios. Adaptability, extensibility, and modularity are basic, mandatory capabilities that SW projects cannot do without. These capabilities are finally available for scenario-based testing in the form of abstract scenarios.
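The composition idea can be sketched in a few lines of Python. Each scenario declares its own location requirements, a combined scenario is the union of those requirements, and a location is appropriate only if it satisfies all of them. The road attributes, thresholds, and names below are invented for illustration and do not come from any particular toolchain.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Road:
    name: str
    lanes_same_direction: int
    has_opposing_lane: bool
    length_m: float


Requirement = Callable[[Road], bool]

# Hypothetical per-scenario location requirements.
CUT_IN: List[Requirement] = [
    lambda r: r.lanes_same_direction >= 2,
    lambda r: r.length_m >= 200.0,
]
ONCOMING: List[Requirement] = [
    lambda r: r.has_opposing_lane,
    lambda r: r.length_m >= 300.0,
]


def compose(*scenarios: List[Requirement]) -> List[Requirement]:
    """A combined scenario must satisfy every requirement of its parts."""
    return [req for scenario in scenarios for req in scenario]


def matching_roads(roads: List[Road], requirements: List[Requirement]) -> List[str]:
    return [r.name for r in roads if all(req(r) for req in requirements)]


ROADS = [
    Road("two_lane_rural", 1, True, 800.0),
    Road("divided_highway", 3, False, 2000.0),
    Road("urban_arterial", 2, True, 350.0),
    Road("short_street", 2, True, 250.0),
]

print(matching_roads(ROADS, CUT_IN))
print(matching_roads(ROADS, compose(CUT_IN, ONCOMING)))
```

Because the requirements are stated abstractly, the same two scenario definitions can be pointed at a different map without being rewritten.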

At the risk of being repetitive, abstract scenarios also depend on the availability of a constraint solver. The need here is evident because the ODD and scenario constraints are explicit and user-defined, unlike the implicit constraints imposed by the laws of physics and vehicle dynamics.

So which abstraction layer should I use while coding my scenarios?

All abstraction levels might be appropriate, depending on the requirement and use case. Nevertheless, here are a few essential guidelines.

- The OSC2 scenario abstraction should be equivalent to or above the requirement specification abstraction. For example, if the requirement asks for a cut-in and lists all scenario attributes, a concrete scenario might be the solution. However, a better choice might be to create an abstract generic cut-in scenario and assign concrete values on top of it. This will be the most effective and productive way to develop and maintain your scenario catalog.

- Be strategic and see beyond a specific project or ODD’s needs. With the OSC2 language and solver technology, you can create or adopt generic V&V packages. For example, it is highly desirable not to hardcode scenarios to a specific map or location. This means that pure logical scenarios should be discouraged.

- Scenario abstraction is typically modified as the project progresses and new insights emerge. You may initially assume that a logical scenario with specific ranges is needed (ignoring the guideline above) and later discover additional ODD needs (e.g., vehicle dynamics constraints or location constraints). Enhancing the scenario with such considerations will turn the logical scenario into an abstract one. This means that your toolchain should support all abstraction levels to maximize control and development efficiency.
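The first guideline, keeping one generic scenario and assigning concrete values on top of it, can be pictured with the Python sketch below. It models only plain ranges and ignores cross-attribute constraints, and the parameter names, ranges, and `instantiate` helper are all hypothetical.

```python
import random
from typing import Dict, Tuple

# Hypothetical generic cut-in: every attribute has a legal range.
GENERIC_CUT_IN: Dict[str, Tuple[float, float]] = {
    "npc_speed_kph": (10.0, 90.0),
    "headway_s": (0.5, 2.0),
    "lane_change_duration_s": (2.0, 6.0),
}


def instantiate(rng: random.Random, **fixed: float) -> Dict[str, float]:
    """Use the values the requirement fixes and sample whatever is left open."""
    unknown = set(fixed) - set(GENERIC_CUT_IN)
    if unknown:
        raise KeyError(f"unknown attributes: {sorted(unknown)}")
    result = {}
    for name, (low, high) in GENERIC_CUT_IN.items():
        if name in fixed:
            value = fixed[name]
            if not low <= value <= high:
                raise ValueError(f"{name}={value} is outside [{low}, {high}]")
        else:
            value = rng.uniform(low, high)
        result[name] = value
    return result


rng = random.Random(3)
# Fully concrete: the requirement lists every attribute.
print(instantiate(rng, npc_speed_kph=45.0, headway_s=0.5, lane_change_duration_s=3.0))
# Partially fixed: only the speed is pinned, the rest is explored.
print(instantiate(rng, npc_speed_kph=45.0))
```

The concrete test and the exploratory test share one definition, so a later change to the generic scenario reaches both.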

Most of the industry acknowledges the need for a massive amount of intelligent, meaningful scenarios. The way to replace the tedious manual work is by defining scenarios in high-level terms and leveraging the constraint solver’s automation to produce known and unknown edge cases. Logical scenarios combined with a constraint solver provide a first level of abstraction. One source of users’ frustration is that tool vendors claim to support logical scenarios but do not provide the necessary solver technology. That being said, only abstract scenarios allow the refined control and reuse required for real-life project productivity and safety. If you wish to know more about abstraction or explore Foretellix’s unique scenario-creation technology, give us a shout at info@foretellix.com.

As usual, drive safe,

Sharon
